As I’ve mentioned, I don’t know much about the firmware modding community. It is amazing what they can do to a system, in ways completely unrelated to security. 🙂 But some readers of this blog are accomplished firmware modders, and one of the smarter ones has suggested a new tool to mention on the blog: GOPupd.

UBU, already mentioned in previous blog posts, was created by members of the win-raid.com forum, and so was this tool. GOPupd is hosted on the win-raid.com forum by one of its members, LordKAG, whom you may have noticed as the source of many UEFITool bug reports.

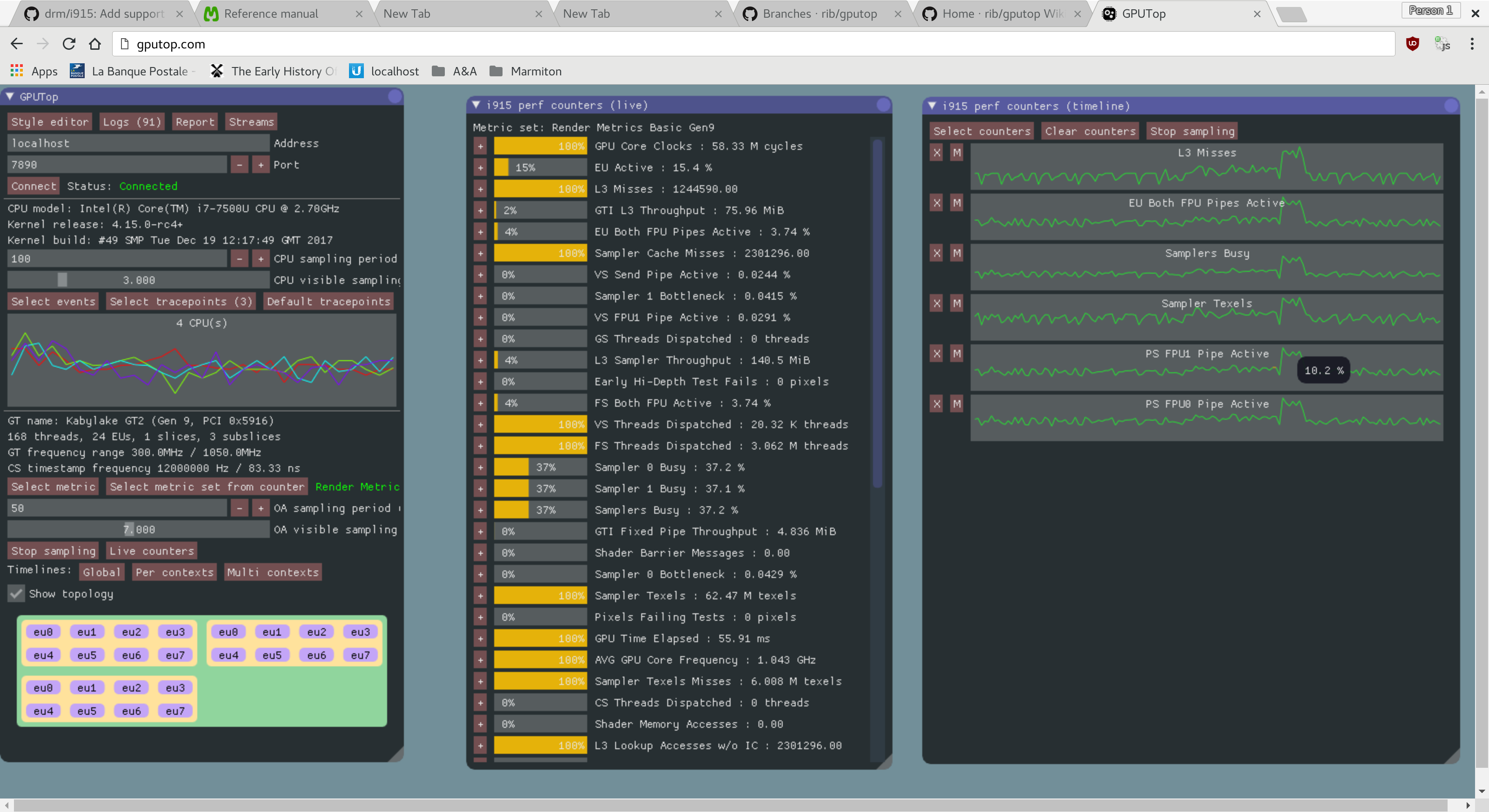

GOPupd is a tool that updates the GOP (Graphics Output Protocol) portion of a VideoBIOS (VBIOS) dumped from various AMD/ATI and Nvidia graphics cards. Advanced users can use the tool not only to update an existing GOP, but also to insert a GOP into a VBIOS that lacks one, basically making an older GPU compatible with pure UEFI (non-CSM) mode. That sounds like a risky operation, but it appears that many readers of this blog are smarter than the writer of this blog, so I presume a few of you would be able to handle this. I’m not sure I would. 🙂 The tool is written in Python. You have to register on the forum to get access to their download URLs.
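To make "a VBIOS with or without a GOP" a bit more concrete, here is a minimal Python sketch that walks the chained images in a VBIOS dump and reports whether one of them is an EFI image. It is not taken from GOPupd and says nothing about how that tool works internally. It only assumes the generic PCI option ROM layout (a `55 AA` signature, a pointer at offset 0x18 to a `PCIR` structure, and a code type of 0x03 for EFI). The function names `list_rom_images` and `has_gop` are made up for illustration, and real dumps may wrap the images in vendor-specific containers that this sketch does not handle.

```python
import struct

# Code type values from the generic PCI option ROM layout.
CODE_TYPE_LEGACY = 0x00  # legacy x86 video BIOS
CODE_TYPE_EFI = 0x03     # EFI image, where a GOP driver would live


def list_rom_images(rom: bytes):
    """Walk chained option ROM images (hypothetical helper, illustration only).

    Returns a list of (offset, code_type, vendor_id, device_id) tuples.
    Stops quietly at the first image that does not look standard.
    """
    images = []
    offset = 0
    while offset + 0x1A <= len(rom):
        # Every image starts with the 55 AA signature.
        if rom[offset:offset + 2] != b"\x55\xaa":
            break
        # Offset 0x18 holds a little-endian pointer to the PCIR structure.
        (pcir_ptr,) = struct.unpack_from("<H", rom, offset + 0x18)
        pcir = offset + pcir_ptr
        if pcir + 0x16 > len(rom) or rom[pcir:pcir + 4] != b"PCIR":
            break
        vendor_id, device_id = struct.unpack_from("<HH", rom, pcir + 0x04)
        # Image length is stored in units of 512 bytes.
        (length_units,) = struct.unpack_from("<H", rom, pcir + 0x10)
        code_type = rom[pcir + 0x14]
        indicator = rom[pcir + 0x15]
        images.append((offset, code_type, vendor_id, device_id))
        # Bit 7 of the indicator marks the last image in the chain.
        if indicator & 0x80 or length_units == 0:
            break
        offset += length_units * 512
    return images


def has_gop(rom: bytes) -> bool:
    """True if any image in the dump is tagged as an EFI image."""
    return any(code_type == CODE_TYPE_EFI for _, code_type, _, _ in list_rom_images(rom))
```

You could point this at a dump you saved yourself, for example `has_gop(open("vbios.rom", "rb").read())`, where `vbios.rom` is a placeholder file name. A `False` result only means no standard EFI image was found by this simple walk, not that the card cannot support one.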

[…] If you are interested in this thread, then you should know a thing or two about GOP. If you need/want pure UEFI Boot (CSM disabled) or Fast Boot, then you need a GOP for your GPU/iGPU, otherwise it is optional (for now). For the iGPU side there is not much you can do, because manufacturers have included them in the UEFI firmware, with GOP drivers from Intel, AMD, ASPEED, Nvidia (recently) and even Matrox. This thread only deals with external cards and only with AMD and Nvidia. This is further limited by the fact that only specific generations have GOP support: for AMD there is a list of IDs in each GOP version, but it is safe to assume that every card after 7xxx generation should work, maybe even 6xxx; for Nvidia there are 6 generations supported – GT21x, GF10x, GF119, GK1xx/GK2xx, GM1xx, GM2xx. […]

http://www.win-raid.com/t892f16-AMD-and-Nvidia-GOP-update-No-requests-DIY.html

http://www.win-raid.com/u369_lordkag.html